How to make the most of Single Take with the Galaxy S22 AI Camera

No matter how quickly you try to seize the moment with your camera, there’s still a chance you may not fully capture the scene. The Single Take function, which was first introduced in the Galaxy S20 series, uses an AI process to take multiple photos and videos at the same time. This function helps you to capture those lost moments when you can’t press the shutter quickly enough.

How far has Single Take evolved with the Galaxy S22’s enhanced AI? Take a closer look at what the Galaxy S22’s AI camera has to offer.

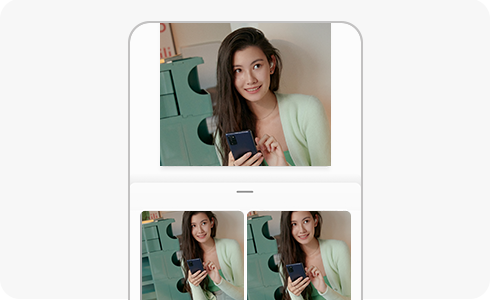

Based on smarter and more powerful AI, the Galaxy S22’s Single Take ensures that precious moments that pass in the blink of an eye are not lost. It records video for up to 20 seconds while simultaneously snapping up to 10 pictures per second.
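As a rough illustration of what those limits imply, the arithmetic can be sketched as follows. The two constants come from the figures above; the function name and the clamping behaviour are assumptions made for this example, not part of the camera software.

```python
# Limits quoted in the article: up to 20 seconds, up to 10 pictures per second.
MAX_RECORD_SECONDS = 20
MAX_PHOTOS_PER_SECOND = 10


def max_candidate_photos(seconds: float) -> int:
    """Upper bound on still frames captured in one Single Take (hypothetical helper)."""
    # Clamp the duration to the supported recording window.
    clamped = max(0.0, min(seconds, MAX_RECORD_SECONDS))
    return int(clamped * MAX_PHOTOS_PER_SECOND)


print(max_candidate_photos(20))  # 200
print(max_candidate_photos(5))   # 50
```

In other words, a full-length Single Take can leave the AI with up to 200 candidate stills to choose from.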

Once it’s done shooting, AI adds various effects to highlight the best photos and videos. It generates up to 10 photos and up to 4 video clips in real time.

- For photos, the shooting results are analysed, and corrections or effects can be applied immediately to maximise the advantages of the photo. If the picture is of a person or a pet, then “Portrait mode” is applied to emphasise the subject. When shooting lively moments or expansive scenery, you can use “Smart Crop” to highlight the most impressive parts of a photo or apply various AI filters to adjust colour and brightness.

- For videos, AI analyses the movement and background in a scene. First, it creates a basic clip: either a fast-forward (3x) video or a video with a slow-motion effect applied to the highlight section. Then, depending on the footage, it creates a unique video such as a Boomerang, Reverse, or Highlight video.
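The video effects named above can be pictured as simple reorderings of a clip's frames. The sketch below is only an illustration under that assumption: frames are stand-in integers, the function names are hypothetical, and it says nothing about how the Galaxy S22 actually implements these effects.

```python
def fast_forward(frames, speed=3):
    """Keep every `speed`-th frame, so playback appears `speed` times faster."""
    return frames[::speed]


def reverse(frames):
    """Play the clip backwards."""
    return frames[::-1]


def boomerang(frames):
    """Play forwards, then backwards, without repeating the turning-point frame."""
    return frames + frames[-2::-1]


clip = list(range(6))        # six stand-in frames: 0..5
print(fast_forward(clip))    # [0, 3]
print(reverse(clip))         # [5, 4, 3, 2, 1, 0]
print(boomerang(clip))       # [0, 1, 2, 3, 4, 5, 4, 3, 2, 1, 0]
```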

It is important to capture various scenes in one shot, but the ability to quickly select the best moments among them is also important. Single Take uses various AI engines based on deep learning and computer vision technology to quickly analyse and select the best photos.

There are many different types of engines that Single Take can use. First, the aesthetic engine is trained on about 300,000 pictures selected by experts and evaluates a picture’s aesthetics, such as sharpness, picture quality, and facial expressions. For example, when taking a portrait photo, it chooses a photo with a wide smile instead of a photo with the subject’s eyes closed or head bowed. When the best photos are selected, AI uses the same deep learning technology to determine which filters and effects to apply, and automatically applies the best filters to your photos.
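To make the idea of best-shot selection concrete, here is a minimal sketch. It is not the aesthetic engine itself, which is a trained deep learning model. The hand-written scoring rule, the field names, and the equal weights are all assumptions chosen for illustration.

```python
from dataclasses import dataclass


@dataclass
class Candidate:
    name: str
    sharpness: float   # hypothetical score in 0..1
    smile: float       # hypothetical score in 0..1
    eyes_open: bool


def aesthetic_score(c: Candidate) -> float:
    """Toy stand-in for a learned aesthetic score."""
    if not c.eyes_open:
        return 0.0  # reject shots where the subject's eyes are closed
    return 0.5 * c.sharpness + 0.5 * c.smile


def pick_best(candidates, k=10):
    """Return the k highest-scoring candidates (the article cites up to 10 photos)."""
    return sorted(candidates, key=aesthetic_score, reverse=True)[:k]


shots = [
    Candidate("sharp_but_neutral", sharpness=0.9, smile=0.2, eyes_open=True),
    Candidate("wide_smile", sharpness=0.8, smile=0.9, eyes_open=True),
    Candidate("eyes_closed", sharpness=0.95, smile=0.95, eyes_open=False),
]
print([c.name for c in pick_best(shots, k=2)])  # ['wide_smile', 'sharp_but_neutral']
```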

One of the factors that determines the completeness of a photo is composition. The Angle Evaluation engine learns the best composition based on a database of about 100 million images and crops the image accordingly. Lastly, when shooting portraits and pets, portrait mode is applied automatically, and it uses AI to separate the subject from the background while applying background effects such as blur.

In addition to photo and filter selection, Single Take uses a variety of AI engines to make intensive editing and processing fast and efficient. “Capture Intelligence” adapts high-speed shooting to the amount of light, so each situation gets the best possible capture. The “Person Recognition Intelligence” function analyses a person’s facial expression, posture, and head tilt, and makes them stand out from the background. “Motion Intelligence” is used in video shooting to find the best moment in the motion of the video.

A video shot with Single Take draws on scene intelligence, capture intelligence, motion intelligence, facial recognition, and intelligent selection, and combines them to pick out the best photos and movements in your videos. Filters, transitions, and background music (BGM) are then applied to the selected scenes to create instantly shareable videos. Scenes with a lot of movement are analysed by motion intelligence, which applies slow-motion, boomerang, or rewind effects and highlights special moments.

Single Take is also useful when you can’t stay in one place for long but want a 24-hour time-lapse video that changes from morning to night, all generated from a single photo.

From the same scene, a GAN (generative adversarial network) can create photos with the lighting adjusted for different times of day, such as sunrise, sunset, or something in between. When these photos are joined with natural transitions, the result is a 12-second time-lapse video.
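The step of joining the generated photos into a 12-second clip can be pictured as a cross-fade between keyframes. The sketch below uses plain linear blending as a stand-in; the article does not say how the transitions are actually produced. The 30 frames-per-second rate, the function name, and the one-value "images" are assumptions for illustration.

```python
def crossfade(keyframes, total_frames):
    """Linearly blend consecutive keyframes into `total_frames` output frames.

    Each keyframe is a flat list of pixel values; all must have the same length.
    """
    if len(keyframes) < 2 or total_frames < 2:
        raise ValueError("need at least two keyframes and two output frames")
    segments = len(keyframes) - 1
    out = []
    for i in range(total_frames):
        # Position along the keyframe sequence, from 0 to `segments`.
        pos = i / (total_frames - 1) * segments
        left = min(int(pos), segments - 1)
        t = pos - left  # blend weight between keyframes[left] and keyframes[left + 1]
        a, b = keyframes[left], keyframes[left + 1]
        out.append([(1 - t) * pa + t * pb for pa, pb in zip(a, b)])
    return out


# Three one-pixel "photos" standing in for sunrise, midday, and sunset lighting.
keys = [[0.0], [10.0], [20.0]]
print(crossfade(keys, 5))  # [[0.0], [5.0], [10.0], [15.0], [20.0]]

FPS = 30  # assumed frame rate, not stated in the article
timelapse = crossfade(keys, 12 * FPS)
print(len(timelapse))      # 360
```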

AI can analyse the sky, clouds, water, trees, and buildings in a photo, restoring them naturally to emphasise outlines and shapes. Or you can make a 24-hour time-lapse video that realistically depicts the passage of time, creating results that contain the flow of the day from a deep blue sky to a fantastic sunset.

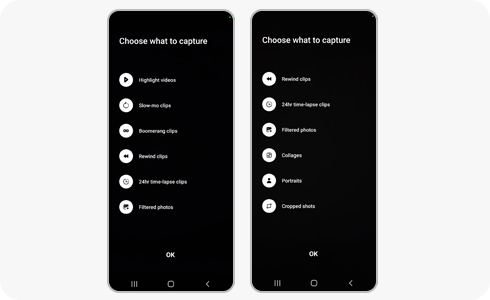

The shooting option setting menu was improved to be more intuitive, so it's easier to choose the content you want. There’s also an option to filter unnecessary content by deactivating the icon, so the content you don’t want will never be created.

If you are shooting in a bright place without movement, you’ll create much more content. Here are some tips for getting the most out of each type of content.

- Slow-motion video: Single Take selects the section of the video with the greatest change and turns it into a slow-motion video. If you don’t want a slow-motion video, adjust the camera settings to shoot a moving video.

- 24-Hour Time-Lapse Video: If you’re shooting bright outdoor scenery, a 24-hour time-lapse video is automatically created. Try to move the camera slowly to capture more scenery.

- Highlights video: Moving the camera slowly or keeping it in one spot produces better results. Shooting in a bright place rather than a dark one also makes Single Take more likely to generate a highlights video.

- Portrait photo: Single Take supports different portrait effects for both people and pets. Position yourself 1 to 2 metres from the subject to get the best results.

- Boomerang/Reverse videos: Boomerang looks great when subjects (people or pets) or scenery (waterfalls or ocean waves) are moving in a scene. Reverse videos are especially funny when there are moving pets or cars in the scene.

The Galaxy S22 series makes it even more convenient to get the best photos and videos. Its artificial intelligence functions will continue to be expanded and developed in the future.
